A constrained optimization problem is a problem of the form

maximize (or minimize) the function ![]() subject to the condition ![]().

In some cases one can solve for ![]() as a function of ![]() and then find the extrema of a one-variable function. That is, if the equation ![]() is equivalent to ![](), then we may set ![]() and then find the values ![]() for which ![]() achieves an extremum. The extrema of ![]() are at ![]().
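One way to write this substitution method out, in assumed notation (the names $F$, $g$, $h$, $f$ and the sample problem below are illustrative choices, not taken from the text):

```latex
% Assumed notation: objective F(x,y), constraint g(x,y) = 0.
\begin{align*}
  g(x,y) = 0 \iff y = h(x)
    &\quad\Longrightarrow\quad f(x) = F\bigl(x, h(x)\bigr), \\
  f \text{ has an extremum at } x = a
    &\quad\Longrightarrow\quad F \text{ has a constrained extremum at } \bigl(a, h(a)\bigr).
\end{align*}

% Hypothetical example: F(x,y) = x^2 + y^2 subject to x + y - 1 = 0.
\begin{align*}
  y &= 1 - x, \\
  f(x) &= x^2 + (1 - x)^2 = 2x^2 - 2x + 1, \\
  f'(x) &= 4x - 2 = 0 \iff x = \tfrac{1}{2}, \\
  f''(x) &= 4 > 0 .
\end{align*}
```

In this sample problem $f$ has a relative minimum at $x = \tfrac{1}{2}$, so $F$ has a constrained relative minimum at $(\tfrac{1}{2}, \tfrac{1}{2})$, where $F = \tfrac{1}{2}$.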

Find the extrema of ![]() subject to ![]().

We solve ![](). Set ![](). Differentiating, we have ![](). Setting ![](), we must solve ![](), or ![](). Differentiating again, ![]() so that ![]() which shows that ![]() is a relative minimum of ![]() and ![]() is a relative minimum of ![]() subject to ![]().

Find the extrema of ![]() subject to ![]().

Using the quadratic formula, we find

Substituting the above expression for ![]() in ![](), we must find the extrema of

and

Setting ![]() (respectively, ![]()) we find ![]() in each case. So the potential extrema are ![]() and ![]().

Evaluating at ![](), we see that ![]() so that ![]() is a relative minimum and, as ![](), ![]() is a relative maximum (even though ![]()!).

If ![]() is a (sufficiently smooth) function in two variables and ![]() is another function in two variables, and we define ![](), and ![]() is a relative extremum of ![]() subject to ![](), then there is some value ![]() such that ![]().
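In assumed notation, the statement above can be sketched as follows. The names $F$, $g$, $H$, $\lambda$ and the plus sign in front of $\lambda g$ are assumptions made for illustration; the usual statement of this theorem also requires $\nabla g(a,b) \neq 0$.

```latex
% Assumed notation and sign convention.
\[
  H(x, y, \lambda) = F(x, y) + \lambda\, g(x, y)
\]
% If (a,b) is a relative extremum of F subject to g(x,y) = 0,
% then for some value of \lambda:
\[
  \nabla H(a, b, \lambda) = 0
  \quad\Longleftrightarrow\quad
  \begin{cases}
    F_x(a,b) + \lambda\, g_x(a,b) = 0, \\
    F_y(a,b) + \lambda\, g_y(a,b) = 0, \\
    g(a,b) = 0 .
  \end{cases}
\]
```

Under this convention the third equation is just the constraint itself, so any solution of the system automatically lies on the constraint curve.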

Find the extrema of the function ![]() subject to the constraint ![]().

Set ![](). Then

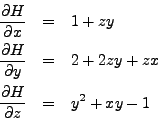

Setting these equal to zero, we see from the third equation that ![](), and from the first equation that ![](), so that from the second equation ![]() implying that ![](). From the third equation, we obtain ![]().
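The same pattern of setting three partial derivatives to zero can be illustrated on a hypothetical problem (chosen here for simplicity, not the one above): $F(x,y) = xy$ subject to $x + y = 2$, with the assumed convention $H = F + \lambda g$.

```latex
% Hypothetical example: F(x,y) = xy subject to x + y - 2 = 0.
\begin{align*}
  H(x, y, \lambda) &= xy + \lambda (x + y - 2), \\
  H_x &= y + \lambda, \qquad H_y = x + \lambda, \qquad H_\lambda = x + y - 2 .
\end{align*}
% Set all three partial derivatives to zero.
\begin{align*}
  H_x = 0 &\implies \lambda = -y, \\
  H_y = 0 &\implies x = -\lambda = y, \\
  H_\lambda = 0 &\implies 2x = 2 \implies x = y = 1, \quad \lambda = -1 .
\end{align*}
```

Here $(1,1)$ is the only candidate. On the constraint, $F = x(2 - x) = 1 - (x-1)^2 \le 1$, so in this sample problem the candidate is a constrained maximum.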

Find the potential extrema of the function ![]() subject to the constraint that ![]().

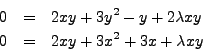

(Equations (1), (2), and (3).)

Multiplying the first line by ![]() and the second by ![]() we obtain:

Subtracting, we have

As ![](), we conclude that ![](). Substituting, we have ![]().

So the potential extrema are at ![]() or ![]().
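The multiply-and-subtract step can be checked on a small problem. The sketch below uses a hypothetical example (not the one above): $F(x,y) = xy$ subject to $x^2 + y^2 = 2$, with $H = xy + \lambda(x^2 + y^2 - 2)$. Multiplying $y + 2\lambda x = 0$ by $y$ and $x + 2\lambda y = 0$ by $x$ and subtracting gives $y^2 - x^2 = 0$, so $y = \pm x$, and the constraint then forces $x = \pm 1$. The function names `grad_H` and `multiplier` are made up for this illustration; the code only verifies, in exact arithmetic, that the four candidates satisfy all three equations.

```python
from fractions import Fraction


def grad_H(x, y, lam):
    """Partial derivatives (H_x, H_y, H_lam) of the hypothetical
    H(x, y, lam) = x*y + lam*(x**2 + y**2 - 2)."""
    return (y + 2 * lam * x, x + 2 * lam * y, x**2 + y**2 - 2)


def multiplier(x, y):
    """Solve the first equation y + 2*lam*x = 0 for lam (requires x != 0)."""
    return Fraction(-y, 2 * x)


# Candidates obtained by hand from y = +/- x and x**2 + y**2 = 2.
candidates = [(1, 1), (-1, -1), (1, -1), (-1, 1)]

results = []
for x, y in candidates:
    lam = multiplier(x, y)
    # Record the point, its multiplier, the gradient of H, and the value of F.
    results.append(((x, y), lam, grad_H(x, y, lam), x * y))

for point, lam, grad, value in results:
    print(point, "lambda =", lam, "grad H =", grad, "F =", value)
```

Each candidate makes all three components of the gradient vanish. Comparing the values of $F$ at the candidates (here $1$ and $-1$) is one way to tell which of them are constrained maxima and which are minima in this sample problem.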